Brain Reading as The Mind Reading Technology

Researchers have shown that artificial intelligence can decode what the human brain is seeing by interpreting functional MRI (fMRI) scans of people watching videos. In a sense, this amounts to a kind of mind-reading technology, and it sheds light on how the human brain processes visual information.

These developments could inform future research into both brain function and artificial intelligence. Central to the work is the convolutional neural network, a type of algorithm that has proven highly useful in recent years. It is crucial to the ability of computers and smartphones to recognize faces and objects.

Mind Reading Technology Wireless

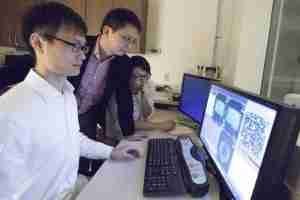

In recent years, this type of network has had a significant influence on computer vision, said Zhongming Liu, an assistant professor in Purdue University’s Weldon School of Biomedical Engineering.

He also holds an appointment in Purdue’s School of Electrical and Computer Engineering. The team uses a neural network to decipher what a person is seeing, he explains. Convolutional neural networks, a form of “deep-learning” algorithm, have previously been applied to the study of how the brain processes static images and other visual stimuli.

The new findings, however, mark the first time such an approach has been used to examine how the brain responds to movies of natural scenes. It is a step toward decoding the brain while people make sense of complex, dynamic visual surroundings, said doctoral student Haiguang Wen. His new study appears in the online edition of Cerebral Cortex.

Brainwave-Reading Gadget

The researchers acquired 11.5 hours of fMRI data from each of three female subjects as they watched 972 short video clips, including clips of people or animals in action and scenes of nature. First, the data were used to train convolutional neural network models to predict activity in the brain’s visual cortex while the subjects watched the videos. The researchers then used the models to decode fMRI data from the subjects and reconstruct the videos, including ones the models had never seen before.
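To make the idea of an encoding model more concrete, here is a minimal sketch, not the researchers’ actual method. It assumes the mapping from network features to brain activity can be approximated by a linear ridge regression, and it uses synthetic random data in place of real CNN features and fMRI recordings. The function names `fit_ridge` and `voxelwise_correlation`, the array sizes, and the noise level are all illustrative assumptions.

```python
import numpy as np

def fit_ridge(features, responses, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha * I)^-1 X^T Y."""
    n_features = features.shape[1]
    gram = features.T @ features + alpha * np.eye(n_features)
    return np.linalg.solve(gram, features.T @ responses)

def voxelwise_correlation(predicted, measured):
    """Pearson correlation between prediction and measurement, one value per voxel."""
    p = predicted - predicted.mean(axis=0)
    m = measured - measured.mean(axis=0)
    return (p * m).sum(axis=0) / (np.linalg.norm(p, axis=0) * np.linalg.norm(m, axis=0))

rng = np.random.default_rng(0)
n_train, n_test, n_features, n_voxels = 400, 100, 20, 50

# Synthetic stand-ins (assumption): "features" play the role of CNN activations
# per time point, "responses" the role of the fMRI signal per voxel.
true_weights = rng.normal(size=(n_features, n_voxels))
train_features = rng.normal(size=(n_train, n_features))
test_features = rng.normal(size=(n_test, n_features))
train_responses = train_features @ true_weights + rng.normal(scale=0.5, size=(n_train, n_voxels))
test_responses = test_features @ true_weights + rng.normal(scale=0.5, size=(n_test, n_voxels))

# Fit on the "training videos", then predict responses to held-out "videos".
weights = fit_ridge(train_features, train_responses, alpha=1.0)
predicted = test_features @ weights
scores = voxelwise_correlation(predicted, test_responses)
print("mean held-out correlation:", scores.mean())
```

Scoring the model on held-out data mirrors the point made in the article: the model is judged on videos it has not seen during training.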

The model accurately decoded the fMRI data into specific image categories. The actual video frames were then shown side by side with the AI’s interpretation of what the person’s brain saw, based on the fMRI scans.
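Decoding brain data into image categories can be illustrated with a toy classifier. The sketch below is an assumption-laden example, not the method used in the study: it simulates voxel patterns for three categories, learns one average pattern (centroid) per category, and assigns each new pattern to the nearest centroid. The category names are borrowed from the article’s examples; the data, the `simulate` and `decode` helpers, and the nearest-centroid rule are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
categories = ["person", "bird", "turtle"]  # labels taken from the article's examples
n_voxels = 30

# Assumption: each category evokes a characteristic mean voxel pattern.
prototypes = rng.normal(size=(len(categories), n_voxels))

def simulate(n_samples):
    """Draw noisy synthetic voxel patterns with known category labels."""
    labels = rng.integers(0, len(categories), size=n_samples)
    patterns = prototypes[labels] + rng.normal(scale=0.5, size=(n_samples, n_voxels))
    return patterns, labels

def decode(patterns, centroids):
    """Assign each pattern to the category whose centroid is closest."""
    distances = np.linalg.norm(patterns[:, None, :] - centroids[None, :, :], axis=2)
    return distances.argmin(axis=1)

train_patterns, train_labels = simulate(300)
test_patterns, test_labels = simulate(100)

# Learn one average pattern per category from the training data.
centroids = np.stack([
    train_patterns[train_labels == k].mean(axis=0) for k in range(len(categories))
])

decoded = decode(test_patterns, centroids)
accuracy = (decoded == test_labels).mean()
print("decoded first pattern as:", categories[decoded[0]])
print("held-out accuracy:", accuracy)
```

Real fMRI data are far noisier than this simulation, so the accuracy here says nothing about the accuracy reported in the study.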

Examples include a water animal, the moon, a turtle, a person, and a bird in flight. According to Wen, one thing that sets the study apart is that the decoding happens nearly in real time, while the subjects are watching the videos. The brain is scanned every two seconds, and the model reconstructs the visual experience as it unfolds.

A Brain-Reading Device for 2021

The researchers were able to determine which regions of the brain were associated with specific visual information. According to Wen, neuroscientists are working to map which parts of the brain are responsible for particular functions. This has long been a central goal of neuroscience.

Wen believes the findings reported in the paper bring the field one step closer to that goal. The brain breaks down a scene of a car passing in front of a building into pieces of information. One location in the brain may represent the car, while another location may represent the building.

The technique makes it possible to visualize the specific information represented at any given location in the brain, and to screen through all the locations in the visual cortex. Doing so shows how the brain divides a visual scene into pieces and then reassembles them into a full understanding of the scene.

Methods of Reading the Mind

The researchers were also able to use a model trained on data from one human subject to predict and decode the brain activity of a different subject. This procedure is called cross-subject encoding and decoding. The finding is significant because it indicates that such models could be applied broadly to study brain function, even in people with visual impairments.

Liu believes that artificial intelligence and neuroscience are entering a new era in which research focuses on the intersection of these two important fields. According to Liu, “our objective is to improve AI using brain-inspired notions in general.” In turn, the team wants to use AI to help understand the brain.

Neuroimaging of the Mind at Work

The researchers therefore believe this strategy will help advance both fields in a way that would not be possible if they were approached separately.

Convolutional neural networks (CNNs) driven by image recognition have already been shown to explain cortical responses to static images in ventral-stream areas. The study further showed that such CNNs can reliably predict and decode functional magnetic resonance imaging (fMRI) data from people watching natural movies.